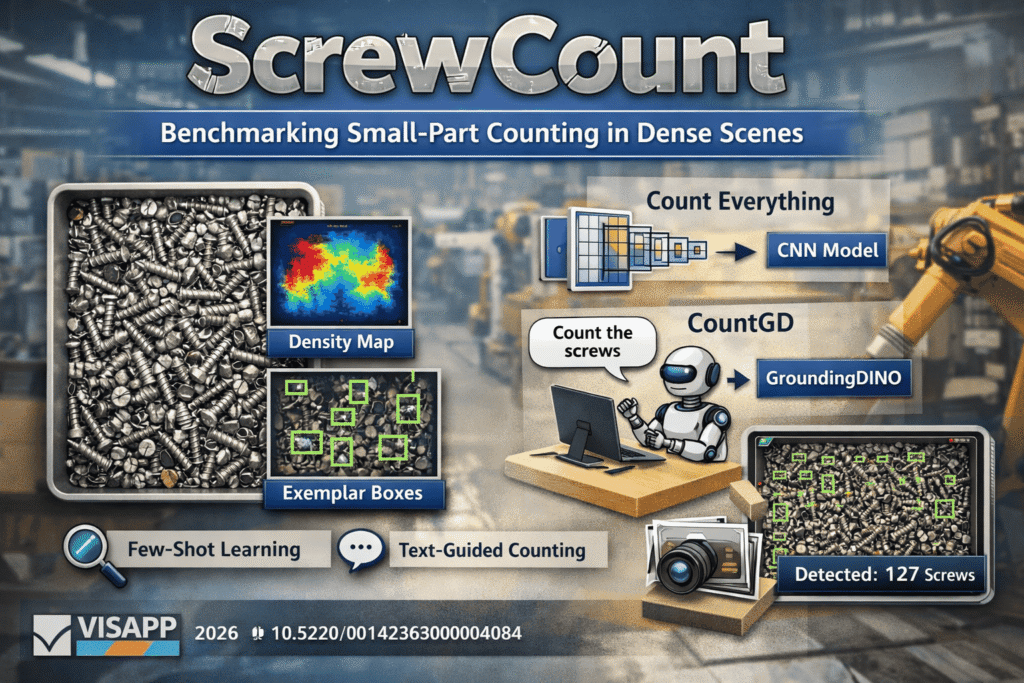

In industrial environments, counting small parts such as screws and nuts is more challenging than it seems. Objects are often tiny, densely packed, overlapping, and visually similar, which makes manual counting slow and traditional detection methods unreliable. ScrewCount was created to address this challenge. It serves as both a dataset and a benchmark for industrial object counting, with support for exemplar-based few-shot counting and text-guided counting.

Why This Problem Matters

In production and inspection workflows, companies often need an accurate count of parts in trays, bins, or work areas. Compared with typical object-detection settings, these scenes usually contain many small, tightly packed objects that are difficult to separate visually. Most existing counting benchmarks focus on crowds, cells, or natural scenes. ScrewCount helps fill this gap by focusing on dense industrial scenes with screws and nuts.

What ScrewCount Includes

ScrewCount contains high-resolution (3024×4032) images of screws and nuts, split into 1,000 training images and 200 test images. The objects vary in size, shape, and color, and each sample can include an RGB image, point annotations, a density map, and up to 6 exemplar boxes. The project also explores two counting approaches built on top of the dataset. Count Everything is a CNN-based exemplar method used for training and inference, while CountGD builds on GroundingDINO for text-guided counting. Together, these components make ScrewCount a common reference point for comparing different industrial counting methods.
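Point annotations and density maps are usually related in a standard way in density-based counting. Each annotated point is spread into a small Gaussian blob that sums to one, so the whole map sums to the object count. A density-regression model then predicts a count by summing its output map. The sketch below is only an illustration of that idea. It assumes points are given as (x, y) pixel coordinates and uses a fixed, hypothetical `sigma`. ScrewCount's own density maps may be generated differently, for example with adaptive kernels or at a reduced resolution. The helper names `points_to_density` and `count_from_density` are illustrative, not part of the project's code.

```python
import numpy as np


def points_to_density(points, height, width, sigma=4.0):
    """Render (x, y) point annotations as a density map that sums to the count.

    Assumption: points are pixel coordinates and sigma is a fixed, illustrative
    kernel width; ScrewCount's own density maps may use different settings.
    """
    density = np.zeros((height, width), dtype=np.float64)
    xs = np.arange(width, dtype=np.float64)
    ys = np.arange(height, dtype=np.float64)
    for x, y in points:
        # Separable Gaussian: one 1-D profile per axis, centered on the point.
        gx = np.exp(-((xs - x) ** 2) / (2.0 * sigma ** 2))
        gy = np.exp(-((ys - y) ** 2) / (2.0 * sigma ** 2))
        kernel = np.outer(gy, gx)  # shape (height, width)
        # Normalize inside the image so every object contributes exactly 1,
        # even when part of its blob would fall outside the frame.
        density += kernel / kernel.sum()
    return density


def count_from_density(density):
    """A density-regression counter reports the integral (sum) of its map."""
    return float(density.sum())


if __name__ == "__main__":
    # Three made-up points on a small 64x48 canvas (one sits in a corner).
    points = [(10.0, 12.0), (30.5, 40.0), (0.0, 0.0)]
    density = points_to_density(points, height=64, width=48, sigma=3.0)
    print(density.shape)                          # (64, 48)
    print(round(count_from_density(density), 6))  # 3.0
```

Each point adds an image-sized kernel, so this loop is slow at the full 3024×4032 resolution with many objects. A practical pipeline would typically downsample first, or place unit impulses at the points and blur them with `scipy.ndimage.gaussian_filter`.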

Why ScrewCount Is Useful for Industry

For industrial AI teams, ScrewCount offers a more realistic benchmark for small-part counting than general-purpose counting datasets. It helps evaluate how models perform in dense scenes with limited annotations and repetitive-looking objects.

This opens the door to more flexible applications in quality control, tray inspection, bin counting, and automation pipelines where fast, reliable part counting is needed. The benchmark also helps researchers assess whether exemplar-based or text-guided methods are better suited to real production constraints.
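Class-agnostic counting benchmarks commonly compare methods using two per-image metrics: mean absolute error (MAE) and root mean squared error (RMSE) between predicted and ground-truth counts. The post does not say which metrics ScrewCount reports, so the sketch below simply assumes these two. The function name `counting_errors` is hypothetical. The counts in the example are made up for illustration and are not ScrewCount results.

```python
import math


def counting_errors(predicted, ground_truth):
    """Return (MAE, RMSE) between per-image predicted and ground-truth counts."""
    if len(predicted) != len(ground_truth):
        raise ValueError("predicted and ground_truth must have the same length")
    if not predicted:
        raise ValueError("need at least one image to evaluate")
    diffs = [p - g for p, g in zip(predicted, ground_truth)]
    mae = sum(abs(d) for d in diffs) / len(diffs)
    # RMSE penalizes a few badly miscounted trays more heavily than MAE does.
    rmse = math.sqrt(sum(d * d for d in diffs) / len(diffs))
    return mae, rmse


if __name__ == "__main__":
    # Hypothetical counts for three test images, not real ScrewCount results.
    mae, rmse = counting_errors([98, 205, 51], [100, 200, 50])
    print(f"MAE={mae:.3f}  RMSE={rmse:.3f}")  # MAE=2.667  RMSE=3.162
```

Reporting both metrics is useful when comparing exemplar-based and text-guided methods. A method can have a low MAE yet a high RMSE if it occasionally fails badly on the densest scenes.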

Open and Accessible

ScrewCount is publicly available as a Hugging Face dataset, and the official implementation is available in our GitHub repository. This makes it easy for researchers and engineers to explore, test, and build on the benchmark. To learn more about the counting approaches and how the dataset was collected, see our publication on this benchmark.

Conclusion

ScrewCount is a focused benchmark for one of the most practical yet overlooked problems in industrial vision: counting many small, similar-looking objects in dense scenes. By combining high-resolution images, point annotations, density maps, exemplar boxes, and both exemplar-based and text-guided counting approaches, it creates a strong foundation for research and deployment in manufacturing-oriented counting tasks.

As industrial AI systems move beyond simple detection and toward flexible scene understanding, benchmarks like ScrewCount will play an important role in measuring what really works in production-like conditions.